As someone who runs a Linux server, I have quickly learned to value stability. Good server software is fully predictable: it just churns away quietly, and most of the time you can even forget it's there. This paints a rather dull picture, but servers are meant to be like that. On the other hand, Linux tends to attract people who like to tinker, to explore, to improve. After all, Linux systems are highly modular, and hence a prime target for tinkering. When those two worlds collide, arguments and controversies arise.

When I was choosing the operating system for my first server, I went with Ubuntu Server. I liked the ideas of easy setup and automatic security updates. However, I didn't quite realize that things would be changing so much. Every dist-upgrade brings new surprises: daemons that collect my data for questionable reasons (whoopsied), or consume tons of resources for no gain at all (I'm looking at you, plymouthd!). I've had startup scripts silently converted to upstart jobs, and X sessions forcibly started even though I had passed the text parameter to the kernel and selected runlevel 3 as the default. Looking back, Ubuntu wasn't a good choice for my server. Now I've decided to hold back on upgrades to avoid getting systemd.

Systemd was meant to be a superior replacement for the aging SystemV Init, but it didn't stop at that. It fancied itself a "system management daemon" and has kept on growing ever since, adding functionality, taking over other packages (udev, dbus, syslog, wireless-tools, and lately even cron), and clashing with the kernel folk. Substantive criticism of systemd includes running too much code as PID 1 (when PID 1 crashes, it takes down the whole system), using binary logging (which is more difficult to recover), and requiring reboots for upgrades. Systemd is also criticized for not complying with the Unix philosophy of "doing one thing and doing it well". If it were to comply, it would have to be broken up into several smaller utilities, and the people behind systemd would not like that, as it would diminish their hold on the Linux world.

A subtler thing systemd does is encourage other programs to use the systemd API, so that they can notify the system that they have started up correctly. This establishes a binary dependency on the systemd libraries, and may cause an entire package to depend on systemd as a result. In Debian you cannot install GNOME without having systemd, and you cannot use the GNU Image Manipulation Program without having systemd installed on your system as well.

The Debian Technical Committee decided to solve this problem by making systemd the default init daemon for Debian. There was something odd about that vote: the discussion was supposed to consider every init system (including both openrc and sysvinit), but quickly devolved into a "systemd vs. upstart" argument, with the committee equally divided between the two options. The way this tie was broken is conspicuous, to say the least, with Ian Jackson one day demanding the resignation of the committee's chairman, and then suddenly "taking a break from the committee", thus allowing the systemd proponents to win. Some suspect outside influence, which is certainly possible. Valve specifically chose Debian over Ubuntu for the base of its SteamOS in order to avoid upstart and its "troublesome" license.

Another theory says that there may be a "submarine patent" on something that systemd uses, and that it could be used to bring down Linux once systemd adoption is high enough. This claim sounds far-fetched, but those who remember the SCO vs. Linux case are not too quick to dismiss it entirely.

My reasons for shunning systemd are mostly pragmatic: the system is too "new and exciting", and hasn't been tested extensively enough for a program that runs as PID 1. I'll be happy to make the switch two years after the entire Linux world happily standardizes on it, but not sooner. Meanwhile, I intend to migrate to Gentoo as soon as I lay my hands on some new hardware. Hopefully it will prove much less "exciting" than Ubuntu did.

| Distribution | Init System |
| --- | --- |
| Ubuntu | upstart/systemd |
| Linux Mint | systemd |
| Debian | systemd |
| Fedora | systemd |
| CentOS | systemd |
| OpenSUSE | systemd |
| Mageia | systemd |
| ArchLinux | systemd |
| Slackware | SystemV Init |
| Gentoo | OpenRC |

Long before smartphones became popular, my cell phone was already fulfilling one important function: waking me up in the morning. Some phones handled this task better than others, but none of them ever failed. The Nokia 6600 treated the alarm clock task especially seriously: if the alarm was set, it would go off, no matter what. If the phone was switched off, it would turn itself on and boot up just to play the alarm tune. After the alarm was dismissed, the phone would promptly switch itself off again.

The first phone to fail the alarm clock task was surprisingly a smartphone: the Android-powered Samsung Galaxy 3. I would set the alarm, and it would simply fail to go off in the morning. What made the issue even more baffling was that my sister had exactly the same model, and she reported no problems with the alarm clock at all. Mine was behaving oddly: sometimes the alarm would work, other times it would fail, seemingly at random. Eventually I figured out that the alarm had the best chance of working if I reset it every evening.

As I found out later, the problem was related to the way memory management is handled in Android. If the device runs out of free memory, the system lists all the running Apps, and decides which one of them should be terminated to reclaim its memory. That would not be a problem if it weren't for one small fact: the alarm clock IS an App. Each time the phone runs out of memory, there is a small chance that the system will stop the alarm clock. Once stopped, the alarm clock will always fail to go off until the user re-runs the application by opening the alarm clock settings. My sister did not use as many memory-intensive applications as I did, and that is why she never encountered this problem.

With the older phones, the alarm clock was always a part of the operating system. Besides making the alarm reliable, this also made impressive extras possible, like the Nokia's boot-up-to-play-the-alarm trick.

Unfortunately, ever since the smartphone revolution, everything just has to be an App, and as this example shows, it's not always a good thing.

Setup

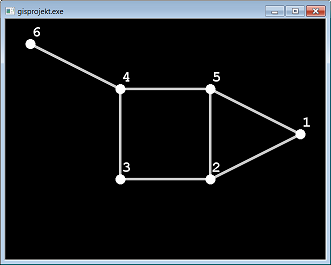

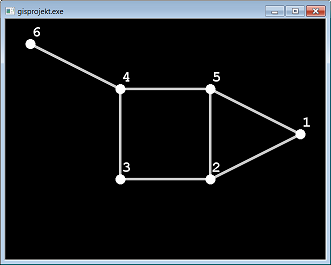

Practically all of my recent projects, both for school and otherwise, are done in high-level programming languages, like Java or Python. As such I've been missing out on many fun low-level features, such as manual memory management, pointer arithmetic, a lean standard library, and of course, debugging adventures! Therefore, given the option to choose the programming language for my graphs and networks project, I immediately decided to go for good old C.

The project is a relatively simple application that visually demonstrates some graph coloring algorithms, using Allegro 5 for graphics. Since the design goals include reading a lot of data from configuration files, I've also included libconfig, to avoid having to parse the files myself. One of those files contains an adjacency matrix of unknown size, which I'm storing in a dynamically allocated char array. To avoid having to deal with dynamic two-dimensional arrays, I've implemented it as a 1-D array, with indexing done like this:

char elem = node_matrix[x + (matrix_width*y)];

The pointer to this array is stored in an AppConfig structure along with some other program settings. At this point all was well, and the development was proceeding just fine.
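The flat indexing described above can be wrapped in a small helper, so that the width used for indexing is written in exactly one place. This is only a sketch: `matrix_index` and its parameter names are hypothetical and not taken from the project.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: map 2-D coordinates (x = column, y = row) to an
   offset in a flat, row-major array whose rows are `width` cells long. */
size_t matrix_index(size_t x, size_t y, size_t width)
{
    assert(x < width);      /* a column must fit inside its row */
    return x + width * y;   /* same formula as in the post */
}
```

With such a helper, a read would look like `node_matrix[matrix_index(x, y, matrix_width)]`. In a 4-wide matrix, for instance, the cell at column 2 of row 1 lands at offset 6.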

The Hunt

The problem appeared right after adding some code to draw the links between graph vertices: the connections were all wrong. After playing with the drawing code for a few minutes, and finding nothing wrong with it, I added a function call to print out the adjacency matrix just before drawing the links. Lo and behold: the matrix was garbled. I added another call to print the matrix just after reading it from file, and at that point it was fine. By moving those two calls around in the code, I eventually found a single line that caused the matrix to become garbled:

config_read_file(cfg, path);

After commenting out the line, the matrix was fine at both points. Putting it back caused the matrix to appear garbled again. Could libconfig be somehow corrupting my program's memory? A quick Google search found no mention of any memory problems with the library. As a quick test, I replaced the offending line with the following:

int *test = malloc (90000 * sizeof(int));

memset(test, 9, 90000 * sizeof(int));

It allocated around 350 kB of memory and set every byte of it to 9. As I suspected, with this code in place, my matrix had a few rows replaced with all nines. At that point I was certain that my matrix was being stored in unallocated memory. How could this happen? I took a quick look at the malloc call that was allocating memory for my matrix, and it looked fine:
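The marker trick used here can be sketched as a pair of helpers. Note that `memset` works byte by byte, so every `char` cell in the block reads as 9, while each `int` in it holds a much larger value made of repeated 9 bytes. The names `alloc_marked` and `count_marker_bytes` are hypothetical, and this is a rough debugging aid rather than a proper memory checker.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical helper: allocate `count` ints and overwrite every byte of
   the block with `marker`, much like the malloc/memset pair in the post.
   Returns NULL if the allocation fails; the caller frees the block. */
int *alloc_marked(size_t count, unsigned char marker)
{
    int *block = malloc(count * sizeof *block);
    if (block != NULL)
        memset(block, marker, count * sizeof *block);
    return block;
}

/* Count how many of the first `len` bytes of `buf` equal `marker`.
   A sudden run of marker bytes inside your own data hints that the data
   sits in memory the allocator was free to hand out to someone else. */
size_t count_marker_bytes(const void *buf, size_t len, unsigned char marker)
{
    const unsigned char *bytes = buf;
    size_t hits = 0;

    assert(buf != NULL);
    for (size_t i = 0; i < len; i++) {
        if (bytes[i] == marker)
            hits++;
    }
    return hits;
}
```

Calling `count_marker_bytes` on the matrix after `alloc_marked(90000, 9)` would give a quick, if crude, yes-or-no answer; a legitimate matrix cell could of course also contain the marker value, so the marker should be one the real data never uses.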

ac->node_matrix = malloc( matrix_width * matrix_width * sizeof(char) ); // square matrix

I took another look at the print function, and the indexing was the same as for the code that was setting the values. I took yet another look at the code setting the values, and THEN I saw it!

ac->node_matrix[x + (ac->screen_width * y)] = (char) result;

A true facepalm moment: I had been using the screen width instead of the matrix width for indexing! I suppose that's one argument against storing all your variables in one structure.

Conclusion

The first row was always written in the correct memory, but all the other rows were written wherever the width of the application window caused them to be written, and that was most certainly outside of the allocated memory block. Since the print function and the set function used the same copy-pasted indexing, the array was consistently written to, and read from, the wrong place. The values written in the unallocated memory stayed there until some other piece of code claimed that particular address. In this case, it was the libconfig call, but it could've been anything. In C, writing values outside of the array does not generate an array-out-of-bounds exception. The code 'just works', until suddenly it doesn't. Debugging is so much fun!
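One way to make this class of bug fail loudly is to let the matrix carry its own width and to route every access through a checked accessor. The sketch below is an assumption about how that could look, not the project's actual code: the `NodeMatrix` type and the `node_matrix_*` functions are hypothetical names. The checks rely on `assert`, so they vanish when the program is compiled with `NDEBUG` defined.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical wrapper: the matrix knows its own width, so there is no
   unrelated field (such as a screen width) to grab by mistake. */
typedef struct {
    size_t width;  /* square matrix: width == height */
    char *cells;   /* width * width entries, row-major */
} NodeMatrix;

/* Allocate a zero-filled width x width matrix; returns nonzero on success. */
int node_matrix_init(NodeMatrix *m, size_t width)
{
    m->width = width;
    m->cells = calloc(width * width, sizeof *m->cells);
    return m->cells != NULL;
}

/* Single place where coordinates become an address. In debug builds an
   out-of-range access aborts instead of silently landing elsewhere. */
char *node_matrix_at(NodeMatrix *m, size_t x, size_t y)
{
    assert(x < m->width && y < m->width);
    return &m->cells[x + m->width * y];
}

void node_matrix_set(NodeMatrix *m, size_t x, size_t y, char value)
{
    *node_matrix_at(m, x, y) = value;
}

char node_matrix_get(NodeMatrix *m, size_t x, size_t y)
{
    return *node_matrix_at(m, x, y);
}

void node_matrix_free(NodeMatrix *m)
{
    free(m->cells);
    m->cells = NULL;
    m->width = 0;
}
```

Because both the setter and the printer would go through `node_matrix_at`, a wrong width can no longer be copy-pasted into two places, and a bad coordinate stops the program at the faulty call rather than several function calls later.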